Asynchronous HTTP APIs with Azure Container Apps jobs

Monday, August 12, 2024

When building HTTP APIs, it can be tempting to synchronously run long-running tasks in a request handler. This approach can lead to slow responses, timeouts, and resource exhaustion. If a request times out or a connection is dropped, the client won't know if the operation completed or not. For CPU-bound tasks, this approach can also bog down the server, making it unresponsive to other requests.

In this post, we'll look at how to build an asynchronous HTTP API with Azure Container Apps. We'll create a simple API that implements the Asynchronous Request-Reply pattern: with the API hosted in a container app and the asynchronous processing done in a job. This approach provides a much more robust and scalable solution for long-running tasks.

Long-running API requests in Azure Container Apps

Azure Container Apps is a serverless container platform. It's ideal for hosting a variety of workloads, including HTTP APIs.

Like other serverless and PaaS platforms, Azure Container Apps is designed for short-lived requests — its ingress currently has a maximum timeout of 4 minutes. As an autoscaling platform, it's designed to scale dynamically based on the number of incoming requests. When scaling in, replicas are removed. Long-running requests can terminate abruptly if the replica handling the request is removed.

Azure Container Apps jobs

Azure Container Apps has two types of resources: apps and jobs. Apps are long-running services that respond to HTTP requests or events. Jobs are tasks that run to completion and can be triggered by a schedule or an event.

Jobs can also be triggered programmatically. This makes them a good fit for implementing asynchronous processing in an HTTP API. The API can start a job execution to process the request and return a response immediately. The job can then take as long as it needs to complete the processing. The client can poll a status endpoint on the app to check if the job has completed and get the result.

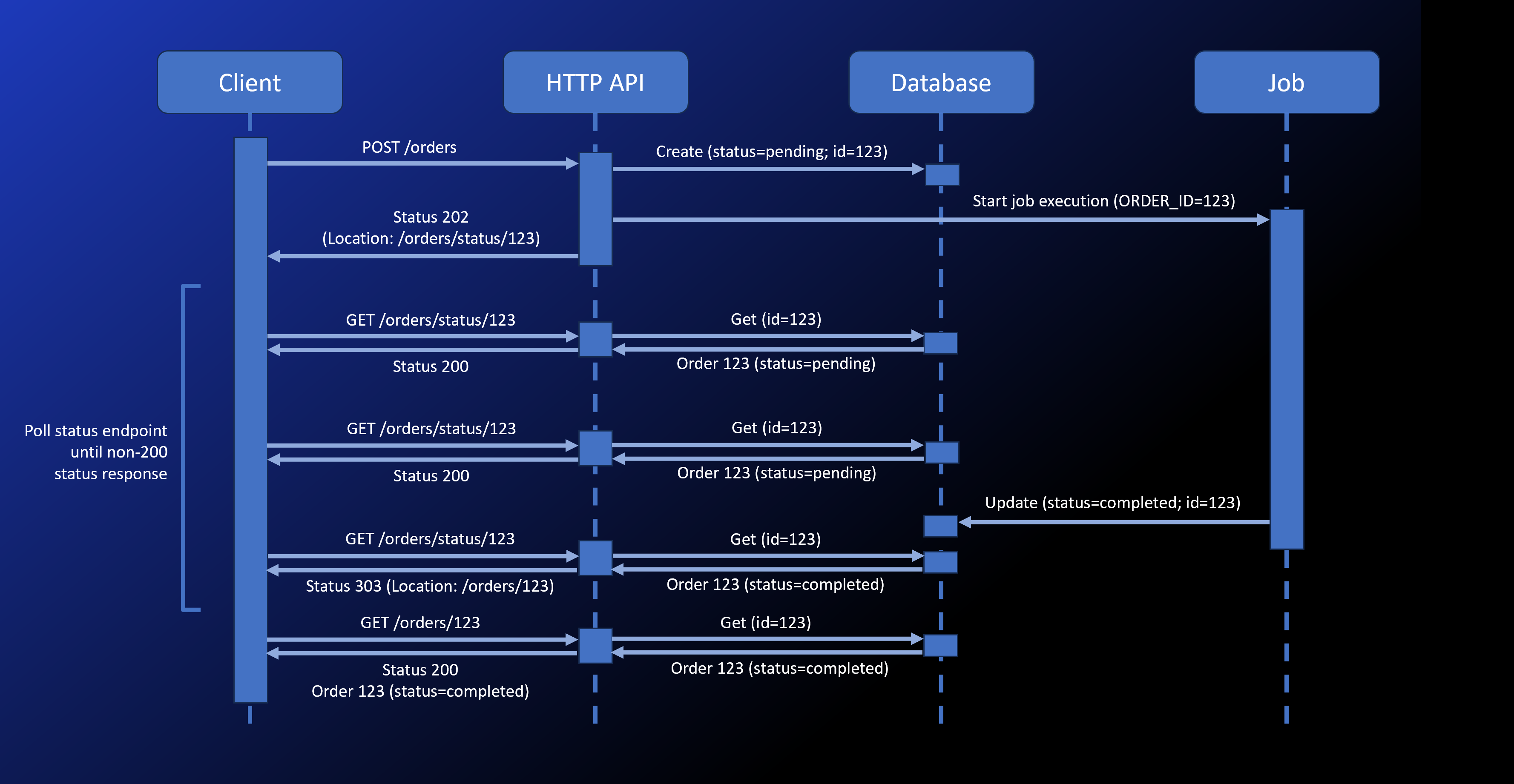

The Asynchronous Request-Reply pattern

Asynchronous Request-Reply is a common pattern for handling long-running operations in HTTP APIs. Instead of waiting for the operation to complete, the API returns a status code indicating that the operation has started. The client can then poll the API to check if the operation has completed.

Here's how the pattern applies to Azure Container Apps:

- The client sends a request to the API (hosted as a container app) to start the operation.

- The API saves the request (our example uses Azure Cosmos DB), starts a job to process the operation, and returns a 202 Accepted status code with a Location header pointing to a status endpoint.

- The client polls the status endpoint. While the operation is in progress, the status endpoint returns a 200 OK status code with a Retry-After header indicating when the client should poll again.

- When the operation is complete, the status endpoint returns a 303 See Other status code with a Location header pointing to the result. The client automatically follows the redirect to get the result.
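The steps above can be walked through with a small in-memory simulation. This is only a sketch of the status codes and headers involved: the `orders` map stands in for the database, `completeOperation` stands in for the job finishing, and all three function names are made up for illustration.

```javascript
// In-memory stand-in for the database (an assumption for illustration).
const orders = new Map()

// Steps 1-2: save the request and answer 202 with the status URL.
function startOperation(id, body) {
  orders.set(id, { id, status: 'pending', order: body })
  return { code: 202, headers: { Location: `/orders/status/${id}` } }
}

// Steps 3-4: 200 + Retry-After while pending, 303 to the result once done.
function pollStatus(id) {
  const item = orders.get(id)
  if (item === undefined) return { code: 404, headers: {} }
  if (item.status === 'pending') {
    return { code: 200, headers: { 'Retry-After': 10 }, body: { status: item.status } }
  }
  return { code: 303, headers: { Location: `/orders/${id}` } }
}

// Stand-in for the job finishing its work.
function completeOperation(id) {
  orders.get(id).status = 'completed'
}

const accepted = startOperation('order-1', { customer: 'Contoso' })
console.log(accepted.code, accepted.headers.Location) // 202 /orders/status/order-1
console.log(pollStatus('order-1').code) // 200
completeOperation('order-1')
console.log(pollStatus('order-1').headers.Location) // /orders/order-1
```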

Async HTTP API app

You can find the source code in this GitHub repository.

The API is a simple Node.js app that uses Fastify. It demonstrates how to build an async HTTP API that accepts orders and offloads the processing of the orders to jobs. The app has a few simple endpoints.

POST /orders

This endpoint accepts an order in its body. It saves the order to Cosmos DB with a status of "pending" and starts a job execution to process the order.

fastify.post('/orders', async (request, reply) => {
  const orderId = randomUUID()

  // save order to Cosmos DB
  await container.items.create({
    id: orderId,
    status: 'pending',
    order: request.body,
  })

  // start job execution
  await startProcessorJobExecution(orderId)

  // return 202 Accepted with Location header
  reply.code(202).header('Location', '/orders/status/' + orderId).send()
})

We'll take a look at the job later in this article. In the above code snippet, startProcessorJobExecution is a function that starts the job execution. It uses the Azure Container Apps management SDK to start the job.

const credential = new DefaultAzureCredential()
const containerAppsClient = new ContainerAppsAPIClient(credential, subscriptionId)

// ...

async function startProcessorJobExecution(orderId) {
  // get the existing job's template
  const { template: processorJobTemplate } =
    await containerAppsClient.jobs.get(resourceGroupName, processorJobName)

  // add the order ID to the job's environment variables
  const environmentVariables = processorJobTemplate.containers[0].env
  environmentVariables.push({ name: 'ORDER_ID', value: orderId })

  // start the job execution with the modified template
  await containerAppsClient.jobs.beginStartAndWait(
    resourceGroupName, processorJobName, {
      template: processorJobTemplate,
    }
  )
}

The job takes the order ID as an environment variable. To set the environment variable, we start the job execution with a modified template that includes the order ID.
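The template change can also be made without mutating the object returned by the SDK. The helper below is a sketch, not code from the repository: `withOrderId` is a hypothetical name, and the sample template only mimics the `containers[].env` shape used above. It copies the template, tolerates a container with no `env` array, and replaces any existing `ORDER_ID` entry rather than appending a duplicate.

```javascript
// Returns a copy of the job template with ORDER_ID set on the first container.
// The input template is left untouched; an existing ORDER_ID entry is replaced.
function withOrderId(template, orderId) {
  const [first, ...rest] = template.containers
  const env = (first.env ?? []).filter((variable) => variable.name !== 'ORDER_ID')
  return {
    ...template,
    containers: [
      { ...first, env: [...env, { name: 'ORDER_ID', value: orderId }] },
      ...rest,
    ],
  }
}

// Hypothetical template, shaped like the one read from the existing job.
const template = {
  containers: [
    {
      name: 'processor',
      image: 'example/processor:latest',
      env: [{ name: 'COSMOSDB_ENDPOINT', value: 'https://example.invalid' }],
    },
  ],
}

const startTemplate = withOrderId(template, 'order-1')
console.log(startTemplate.containers[0].env.map((variable) => variable.name))
```

The result could then be passed as the `template` option when starting the job execution, in place of the mutated original.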

We use managed identities to authenticate with both the Azure Container Apps management SDK and the Cosmos DB SDK.

GET /orders/status/:orderId

The previous endpoint returns a 202 Accepted status code with a Location header pointing to this status endpoint. The client can poll this endpoint to check the status of the order.

This request handler retrieves the order from Cosmos DB. If the order is still pending, it returns a 200 OK status code with a Retry-After header indicating when the client should poll again. If the order is complete, it returns a 303 See Other status code with a Location header pointing to the result.

fastify.get('/orders/status/:orderId', async (request, reply) => {
  const { orderId } = request.params

  // get the order from Cosmos DB
  const { resource: item } = await container.item(orderId, orderId).read()

  if (item === undefined) {
    reply.code(404).send()
    return
  }

  if (item.status === 'pending') {
    reply.code(200).headers({
      'Retry-After': 10,
    }).send({ status: item.status })
  } else {
    reply.code(303).header('Location', '/orders/' + orderId).send()
  }
})

GET /orders/:orderId

This endpoint returns the result of the order processing. The status endpoint redirects to this resource when the order is complete. It retrieves the order from Cosmos DB and returns it.

fastify.get('/orders/:orderId', async (request, reply) => {
  const { orderId } = request.params

  // get the order from Cosmos DB
  const { resource: item } = await container.item(orderId, orderId).read()

  if (item === undefined || item.status === 'pending') {
    reply.code(404).send()
    return
  }

  if (item.status === 'completed') {
    reply.code(200).send({ id: item.id, status: item.status, order: item.order })
  } else if (item.status === 'failed') {
    reply.code(500).send({ id: item.id, status: item.status, error: item.error })
  }
})

Order processor job

The order processor job is another Node.js app. Because it's a demo, it simply waits a while, updates the order status in Cosmos DB, and exits. In a real-world scenario, the job would process the order, update the order status, and possibly send a notification.

We deploy it as a job in Azure Container Apps. The POST /orders endpoint above starts the job execution. The job takes the order ID as an environment variable and uses it to update the order status in Cosmos DB.

Like the API app, the job uses managed identities to authenticate with Azure Cosmos DB.

The code is in the same GitHub repository.

import { DefaultAzureCredential } from '@azure/identity'
import { CosmosClient } from '@azure/cosmos'

const credential = new DefaultAzureCredential()

const client = new CosmosClient({
  endpoint: process.env.COSMOSDB_ENDPOINT,
  aadCredentials: credential
})

const database = client.database('async-api')
const container = database.container('statuses')

const orderId = process.env.ORDER_ID

const orderItem = await container.item(orderId, orderId).read()
const orderResource = orderItem.resource

if (orderResource === undefined) {
  console.error('Order not found')
  process.exit(1)
}

// simulate processing time
const orderProcessingTime = Math.floor(Math.random() * 30000)
console.log(`Processing order ${orderId} for ${orderProcessingTime}ms`)
await new Promise(resolve => setTimeout(resolve, orderProcessingTime))

// update order status in Cosmos DB
orderResource.status = 'completed'
orderResource.order.completedAt = new Date().toISOString()
await orderItem.item.replace(orderResource)

console.log(`Order ${orderId} processed`)
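The demo job only ever writes a "completed" status, while the GET /orders/:orderId handler also checks for a "failed" status and an error field. One way a job could record failures is sketched below. It assumes the job itself writes that status and field; `markCompleted`, `markFailed`, and `processOrder` are hypothetical names, and the `work` callback stands in for the real processing.

```javascript
// Returns a copy of the order document marked as completed.
function markCompleted(orderResource, now = new Date()) {
  return {
    ...orderResource,
    status: 'completed',
    order: { ...orderResource.order, completedAt: now.toISOString() },
  }
}

// Returns a copy of the order document marked as failed, with the error message.
// Assumption: the 'failed' status and `error` field are written by the job.
function markFailed(orderResource, error) {
  return { ...orderResource, status: 'failed', error: String(error?.message ?? error) }
}

// Runs `work` and always resolves to the document that should be saved.
async function processOrder(orderResource, work) {
  try {
    await work(orderResource.order)
    return markCompleted(orderResource)
  } catch (error) {
    return markFailed(orderResource, error)
  }
}

processOrder({ id: 'order-1', status: 'pending', order: {} }, async () => {
  throw new Error('payment declined')
}).then((doc) => console.log(doc.status, doc.error)) // failed payment declined
```

In the job, the document returned by `processOrder` would be what gets written back to Cosmos DB, so a client polling the status endpoint is redirected to a result even when processing fails.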

HTTP client

To call the API and wait for the result, here's a simple JavaScript function that works just like fetch but waits for the job to complete. It also accepts a callback function that's called each time the status endpoint is polled so you can log the status or update the UI.

async function fetchAndWait() {
  const input = arguments[0]
  let init = arguments[1]
  let onStatusPoll = arguments[2]

  // if the second argument is a function, treat it as the callback
  if (typeof init === 'function') {
    init = undefined
    onStatusPoll = arguments[1]
  }
  onStatusPoll = onStatusPoll || (async () => {})

  // make the initial request
  const response = await fetch(input, init)
  if (response.status !== 202) {
    throw new Error(`Something went wrong\nResponse: ${await response.text()}\n`)
  }

  const responseOrigin = new URL(response.url).origin
  let statusLocation = response.headers.get('Location')

  // if the Location header is not an absolute URL, construct it
  statusLocation = new URL(statusLocation, responseOrigin).href

  // poll the status endpoint until it's redirected to the final result
  while (true) {
    const response = await fetch(statusLocation, {
      redirect: 'follow'
    })

    if (response.status !== 200 && !response.redirected) {
      // read the body as text: error responses such as the 404 have no JSON body
      const text = await response.text()
      throw new Error(`Something went wrong\nResponse: ${text}\n`)
    }

    // redirected, return final result and stop polling
    if (response.redirected) {
      const data = await response.json()
      return data
    }

    // the Retry-After header indicates how long to wait before polling again
    const retryAfter = parseInt(response.headers.get('Retry-After')) || 10

    // call the onStatusPoll callback so we can log the status or update the UI
    await onStatusPoll({
      response,
      retryAfter,
    })

    await new Promise(resolve => setTimeout(resolve, retryAfter * 1000))
  }
}
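The polling loop reads Retry-After with parseInt, which is enough for this API because it always sends a number of seconds. The HTTP specification also allows the header to carry an HTTP-date. A client that needs to handle both forms could use something like the following sketch, where `parseRetryAfter` and its parameters are hypothetical names, not part of the sample.

```javascript
// Converts a Retry-After header value to a delay in seconds.
// Accepts delay-seconds ("10") or an HTTP-date; falls back when missing or invalid.
function parseRetryAfter(value, fallbackSeconds = 10, now = Date.now()) {
  if (value === null || value === undefined) return fallbackSeconds

  const trimmed = String(value).trim()

  // delay-seconds form: a non-negative integer
  if (/^\d+$/.test(trimmed)) return Number(trimmed)

  // HTTP-date form: wait until that moment, never a negative delay
  const date = Date.parse(trimmed)
  if (Number.isNaN(date)) return fallbackSeconds
  return Math.max(0, Math.ceil((date - now) / 1000))
}

console.log(parseRetryAfter('10')) // 10
console.log(parseRetryAfter(null)) // 10
```

Unlike `parseInt(...) || 10`, this helper returns 0 for a header value of "0" instead of falling back to the default.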

To use fetchAndWait, we call it just like fetch, with one additional argument: a callback function that's invoked each time the status endpoint is polled.

const order = await fetchAndWait('/orders', {
  method: 'POST',
  headers: {
    'Content-Type': 'application/json'
  },
  body: JSON.stringify({
    "customer": "Contoso",
    "items": [
      {
        "name": "Apple",
        "quantity": 5
      },
      {
        "name": "Banana",
        "quantity": 3
      },
    ],
  })
}, async ({ response, retryAfter }) => {
  const { status } = await response.json()
  const requestUrl = response.url
  messagesDiv.innerHTML += `Order status: ${status}; retrying in ${retryAfter} seconds (${requestUrl})\n`
})

// display the final result
document.querySelector('#order').innerHTML = JSON.stringify(order, null, 2)

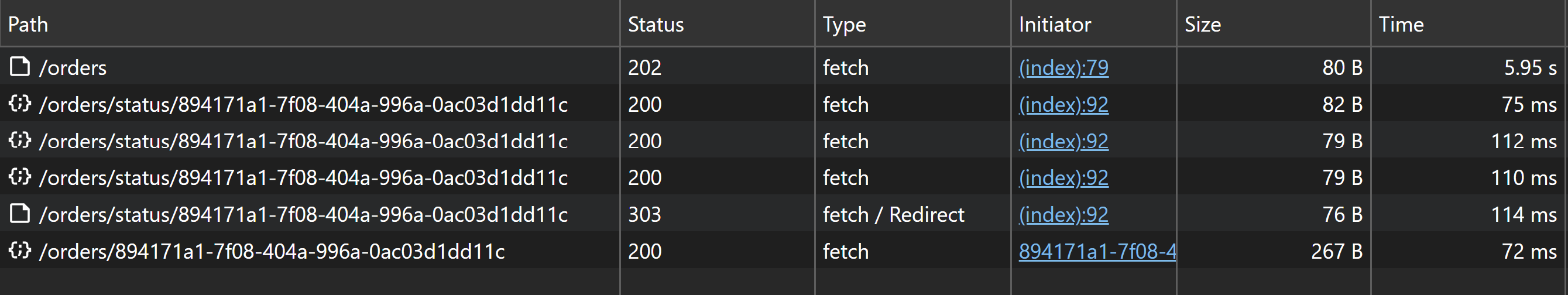

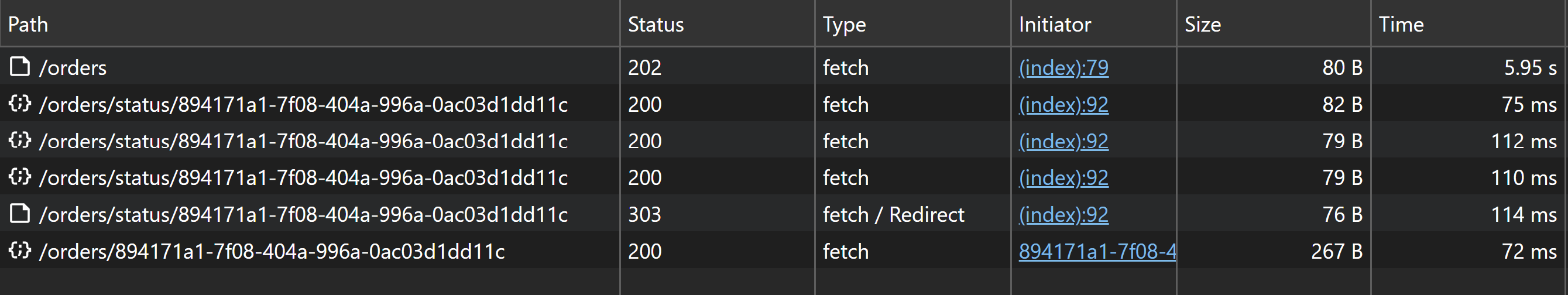

If we run this in the browser, we can open up dev tools and see all the HTTP requests that are made.

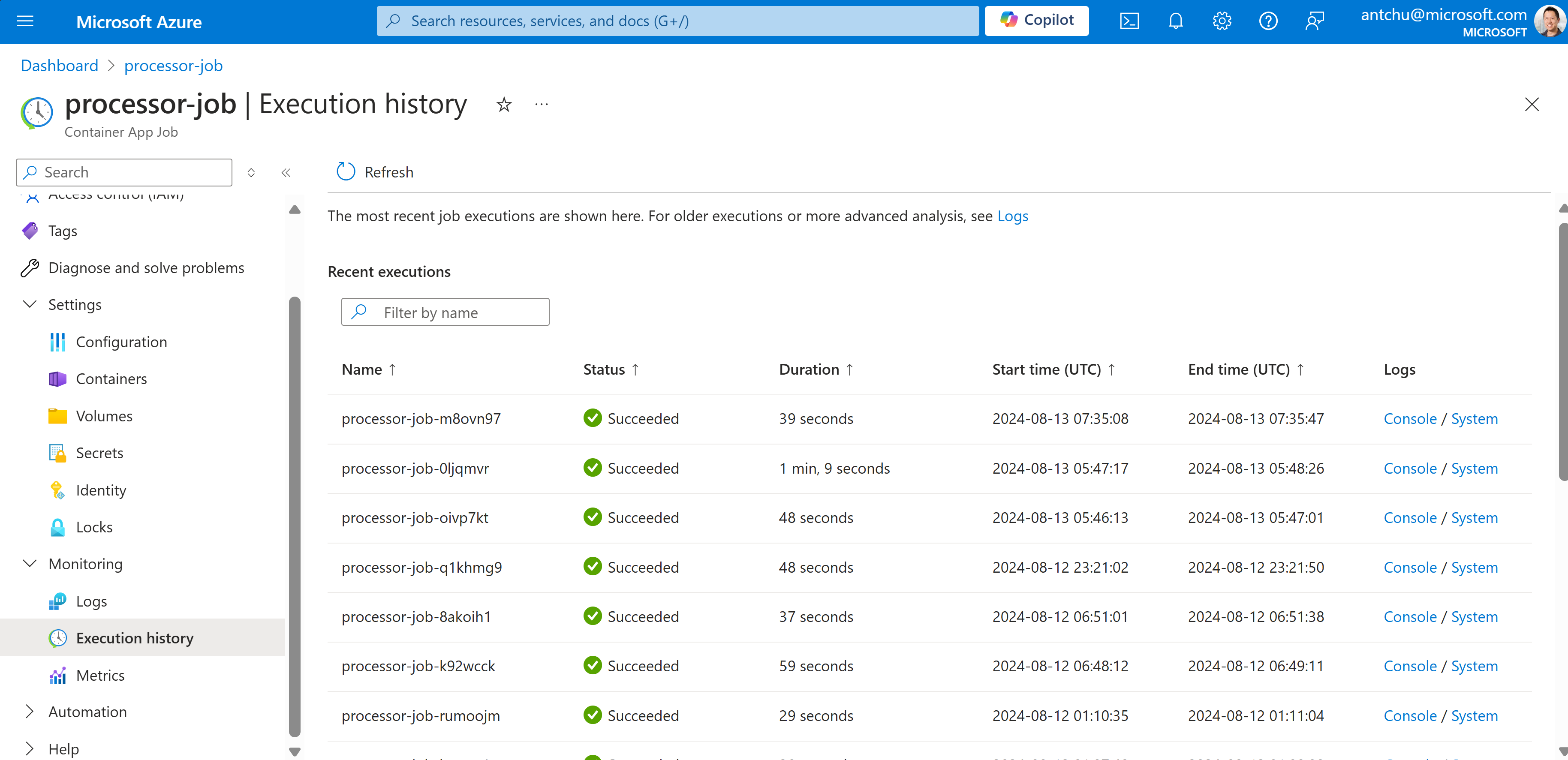

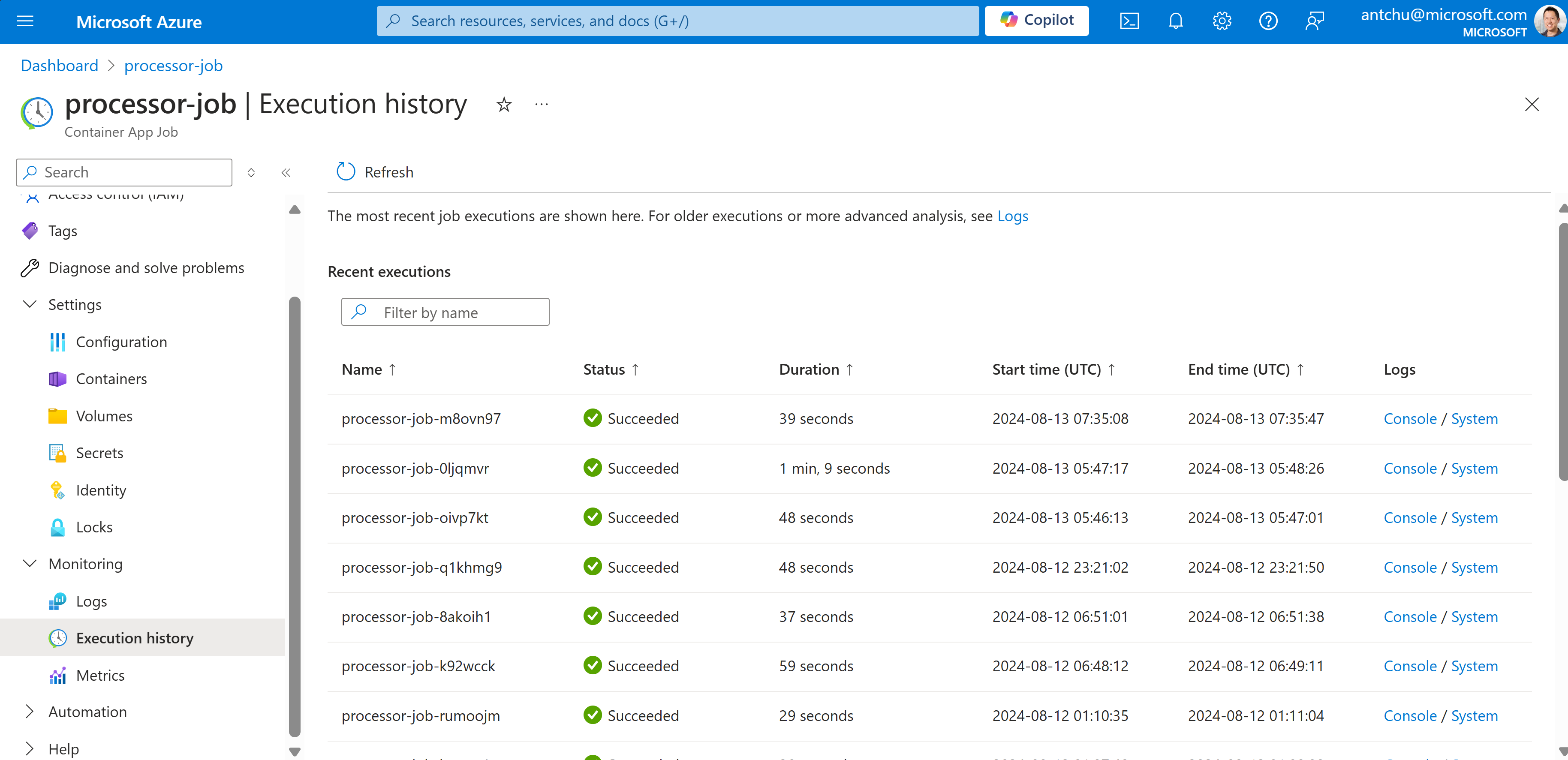

In the portal, we can also see the job execution history.

Conclusion

With the Asynchronous Request-Reply pattern, we can build robust and scalable HTTP APIs that handle long-running operations. By using Azure Container Apps jobs, we can offload the processing to a job execution that doesn't consume resources from the API app. This approach allows the API to respond quickly and handle many requests concurrently.

When building HTTP APIs, it can be tempting to synchronously run long-running tasks in a request handler. This approach can lead to slow responses, timeouts, and resource exhaustion. If a request times out or a connection is dropped, the client won't know if the operation completed or not. For CPU-bound tasks, this approach can also bog down the server, making it unresponsive to other requests.

In this post, we'll look at how to build an asynchronous HTTP API with Azure Container Apps. We'll create a simple API that implements the Asynchronous Request-Reply pattern: with the API hosted in a container app and the asynchronous processing done in a job. This approach provides a much more robust and scalable solution for long-running tasks.

Long-running API requests in Azure Container Apps

Azure Container Apps is a serverless container platform. It's ideal for hosting a variety of workloads, including HTTP APIs.

Like other serverless and PaaS platforms, Azure Container Apps is designed for short-lived requests — its ingress currently has a maximum timeout of 4 minutes. As an autoscaling platform, it's designed to scale dynamically based on the number of incoming requests. When scaling in, replicas are removed. Long-running requests can terminate abruptly if the replica handling the request is removed.

Azure Container Apps jobs

Azure Container Apps has two types of resources: apps and jobs. Apps are long-running services that respond to HTTP requests or events. Jobs are tasks that run to completion and can be triggered by a schedule or an event.

Jobs can also be triggered programmatically. This makes them a good fit for implementing asynchronous processing in an HTTP API. The API can start a job execution to process the request and return a response immediately. The job can then take as long as it needs to complete the processing. The client can poll a status endpoint on the app to check if the job has completed and get the result.

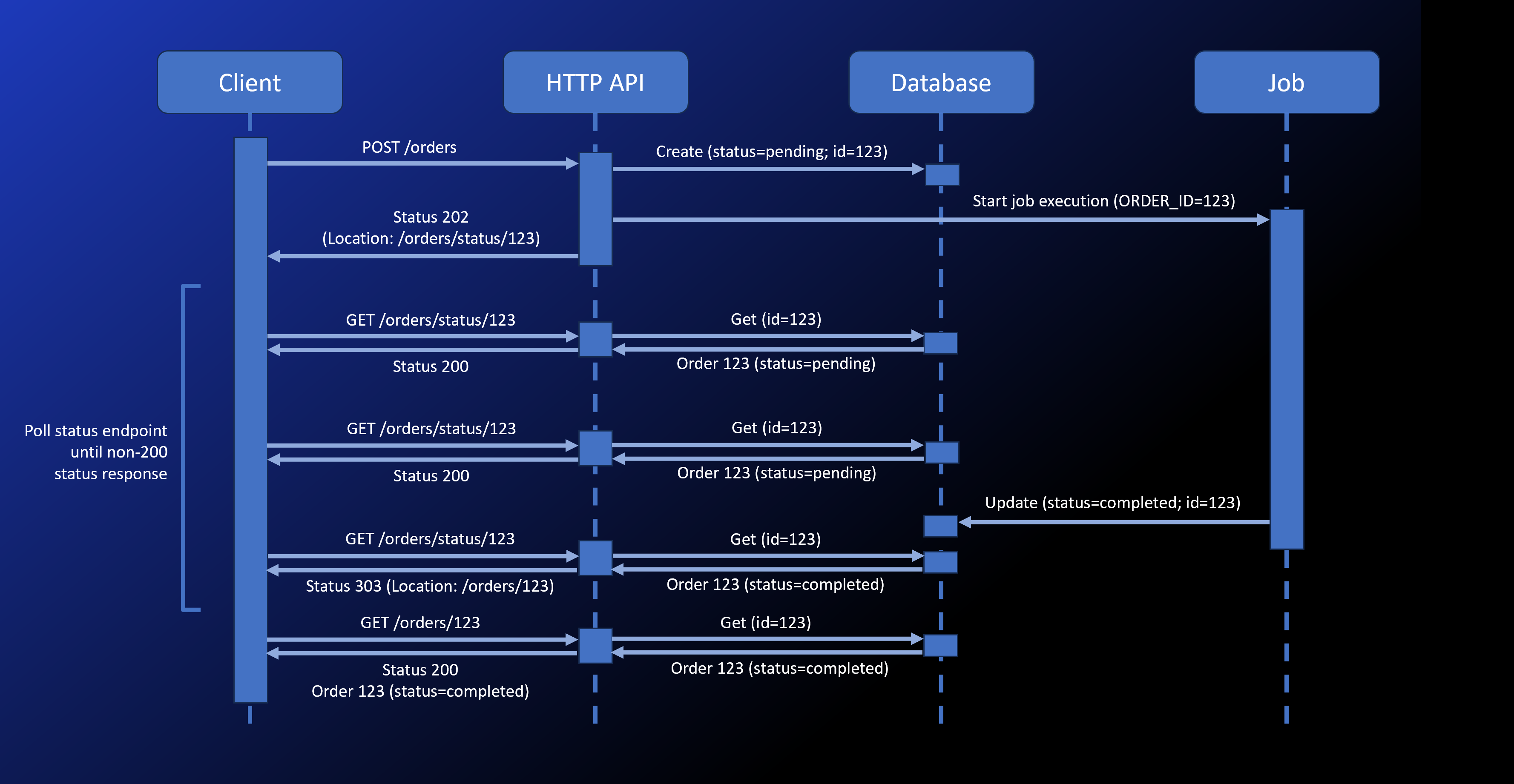

The Asynchronous Request-Reply pattern

Asynchronous Request-Reply is a common pattern for handling long-running operations in HTTP APIs. Instead of waiting for the operation to complete, the API returns a status code indicating that the operation has started. The client can then poll the API to check if the operation has completed.

Here's how the pattern applies to Azure Container Apps:

- The client sends a request to the API (hosted as a container app) to start the operation.

- The API saves the request (our example uses Azure Cosmos DB), starts a job to process the operation, and returns a

202 Acceptedstatus code with aLocationheader pointing to a status endpoint. - The client polls the status endpoint. While the operation is in progress, the status endpoint returns a

200 OKstatus code with aRetry-Afterheader indicating when the client should poll again. - When the operation is complete, the status endpoint returns a

303 See Otherstatus code with aLocationheader pointing to the result. The client automatically follows the redirect to get the result.

Async HTTP API app

You can find the source code in this GitHub repository.

The API is a simple Node.js app that uses Fastify. It demonstrates how to build an async HTTP API that accepts orders and offloads the processing of the orders to jobs. The app has a few simple endpoints.

POST /orders

This endpoint accepts an order in its body. It saves the order to Cosmos DB with a status of "pending" and starts a job execution to process the order.

fastify.post('/orders', async (request, reply) => {

const orderId = randomUUID()

// save order to Cosmos DB

await container.items.create({

id: orderId,

status: 'pending',

order: request.body,

})

// start job execution

await startProcessorJobExecution(orderId)

// return 202 Accepted with Location header

reply.code(202).header('Location', '/orders/status/' + orderId).send()

})

We'll take a look at the job later in this article. In the above code snippet, startProcessorJobExecution is a function that starts the job execution. It uses the Azure Container Apps management SDK to start the job.

const credential = new DefaultAzureCredential()

const containerAppsClient = new ContainerAppsAPIClient(credential, subscriptionId)

// ...

async function startProcessorJobExecution(orderId) {

// get the existing job's template

const { template: processorJobTemplate } =

await containerAppsClient.jobs.get(resourceGroupName, processorJobName)

// add the order ID to the job's environment variables

const environmentVariables = processorJobTemplate.containers[0].env

environmentVariables.push({ name: 'ORDER_ID', value: orderId })

const jobStartTemplate = { template: processorJobTemplate }

// start the job execution with the modified template

const jobExecution = await containerAppsClient.jobs.beginStartAndWait(

resourceGroupName, processorJobName, {

template: processorJobTemplate,

}

)

}

The job takes the order ID as an environment variable. To set the environment variable, we start the job execution with a modified template that includes the order ID.

We use managed identities to authenticate with both the Azure Container Apps management SDK and the Cosmos DB SDK.

GET /orders/status/:orderId

The previous endpoint returns a 202 Accepted status code with a Location header pointing to this status endpoint. The client can poll this endpoint to check the status of the order.

This request handler retrieves the order from Cosmos DB. If the order is still pending, it returns a 200 OK status code with a Retry-After header indicating when the client should poll again. If the order is complete, it returns a 303 See Other status code with a Location header pointing to the result.

fastify.get('/orders/status/:orderId', async (request, reply) => {

const { orderId } = request.params

// get the order from Cosmos DB

const { resource: item } = await container.item(orderId, orderId).read()

if (item === undefined) {

reply.code(404).send()

return

}

if (item.status === 'pending') {

reply.code(200).headers({

'Retry-After': 10,

}).send({ status: item.status })

} else {

reply.code(303).header('Location', '/orders/' + orderId).send()

}

})

GET /orders/:orderId

This endpoint returns the result of the order processing. The status endpoint redirects to this resource when the order is complete. It retrieves the order from Cosmos DB and returns it.

fastify.get('/orders/:orderId', async (request, reply) => {

const { orderId } = request.params

// get the order from Cosmos DB

const { resource: item } = await container.item(orderId, orderId).read()

if (item === undefined || item.status === 'pending') {

reply.code(404).send()

return

}

if (item.status === 'completed') {

reply.code(200).send({ id: item.id, status: item.status, order: item.order })

} else if (item.status === 'failed') {

reply.code(500).send({ id: item.id, status: item.status, error: item.error })

}

})

Order processor job

The order processor job is a another Node.js app. As it's just a demo, it just waits a while, updates the order status in Cosmos DB, and exits. In a real-world scenario, the job would process the order, update the order status, and possibly send a notification.

We deploy it as a job in Azure Container Apps. The POST /orders endpoint above starts the job execution. The job takes the order ID as an environment variable and uses it to update the order status in Cosmos DB.

Like the API app, the job uses managed identities to authenticate with Azure Cosmos DB.

The code is in the same GitHub repository.

import { DefaultAzureCredential } from '@azure/identity'

import { CosmosClient } from '@azure/cosmos'

const credential = new DefaultAzureCredential()

const client = new CosmosClient({

endpoint: process.env.COSMOSDB_ENDPOINT,

aadCredentials: credential

})

const database = client.database('async-api')

const container = database.container('statuses')

const orderId = process.env.ORDER_ID

const orderItem = await container.item(orderId, orderId).read()

const orderResource = orderItem.resource

if (orderResource === undefined) {

console.error('Order not found')

process.exit(1)

}

// simulate processing time

const orderProcessingTime = Math.floor(Math.random() * 30000)

console.log(`Processing order ${orderId} for ${orderProcessingTime}ms`)

await new Promise(resolve => setTimeout(resolve, orderProcessingTime))

// update order status in Cosmos DB

orderResource.status = 'completed'

orderResource.order.completedAt = new Date().toISOString()

await orderItem.item.replace(orderResource)

console.log(`Order ${orderId} processed`)

HTTP client

To call the API and wait for the result, here's a simple JavaScript function that works just like fetch but waits for the job to complete. It also accepts a callback function that's called each time the status endpoint is polled so you can log the status or update the UI.

async function fetchAndWait() {

const input = arguments[0]

let init = arguments[1]

let onStatusPoll = arguments[2]

// if arguments[1] is not a function

if (typeof init === 'function') {

init = undefined

onStatusPoll = arguments[1]

}

onStatusPoll = onStatusPoll || (async () => {})

// make the initial request

const response = await fetch(input, init)

if (response.status !== 202) {

throw new Error(`Something went wrong\nResponse: ${await response.text()}\n`)

}

const responseOrigin = new URL(response.url).origin

let statusLocation = response.headers.get('Location')

// if the Location header is not an absolute URL, construct it

statusLocation = new URL(statusLocation, responseOrigin).href

// poll the status endpoint until it's redirected to the final result

while (true) {

const response = await fetch(statusLocation, {

redirect: 'follow'

})

if (response.status !== 200 && !response.redirected) {

const data = await response.json()

throw new Error(`Something went wrong\nResponse: ${JSON.stringify(data, null, 2)}\n`)

}

// redirected, return final result and stop polling

if (response.redirected) {

const data = await response.json()

return data

}

// the Retry-After header indicates how long to wait before polling again

const retryAfter = parseInt(response.headers.get('Retry-After')) || 10

// call the onStatusPoll callback so we can log the status or update the UI

await onStatusPoll({

response,

retryAfter,

})

await new Promise(resolve => setTimeout(resolve, retryAfter * 1000))

}

}

To use the function, we call it just like fetch. We pass an additional argument that's a callback function that's invoked each time the status endpoint is polled.

const order = await fetchAndWait('/orders', {

method: 'POST',

headers: {

'Content-Type': 'application/json'

},

body: JSON.stringify({

"customer": "Contoso",

"items": [

{

"name": "Apple",

"quantity": 5

},

{

"name": "Banana",

"quantity": 3

},

],

})

}, async ({ response, retryAfter }) => {

const { status } = await response.json()

const requestUrl = response.url

messagesDiv.innerHTML += `Order status: ${status}; retrying in ${retryAfter} seconds (${requestUrl})\n`

})

// display the final result

document.querySelector('#order').innerHTML = JSON.stringify(order, null, 2)

If we run this in the browser, we can open up dev tools and see all the HTTP requests that are made.

In the portal, we also can see the job execution history.

Conclusion

With the Asynchronous Request-Reply pattern, we can build robust and scalable HTTP APIs that handle long-running operations. By using Azure Container Apps jobs, we can offload the processing to a job execution that doesn't consume resources from the API app. This robust approach allows the API to respond quickly and handle many requests concurrently.